Small Action Models Are the Future of AI Agents

2025 is the year of agents, and the key capability of agents is calling tools.

When using Claude Code, I can tell the AI to sift through a newsletter, find all the links to startups, verify they exist in our CRM, with a single command. This might involve two or three different tools being called.

But here’s the problem: using a large foundation model for this is expensive, often rate-limited, and overpowered for a selection task. It’s like using a Ferrari to deliver pizza. The computational overhead makes no economic sense when the primary task is choosing the right tool, not performing complex reasoning.

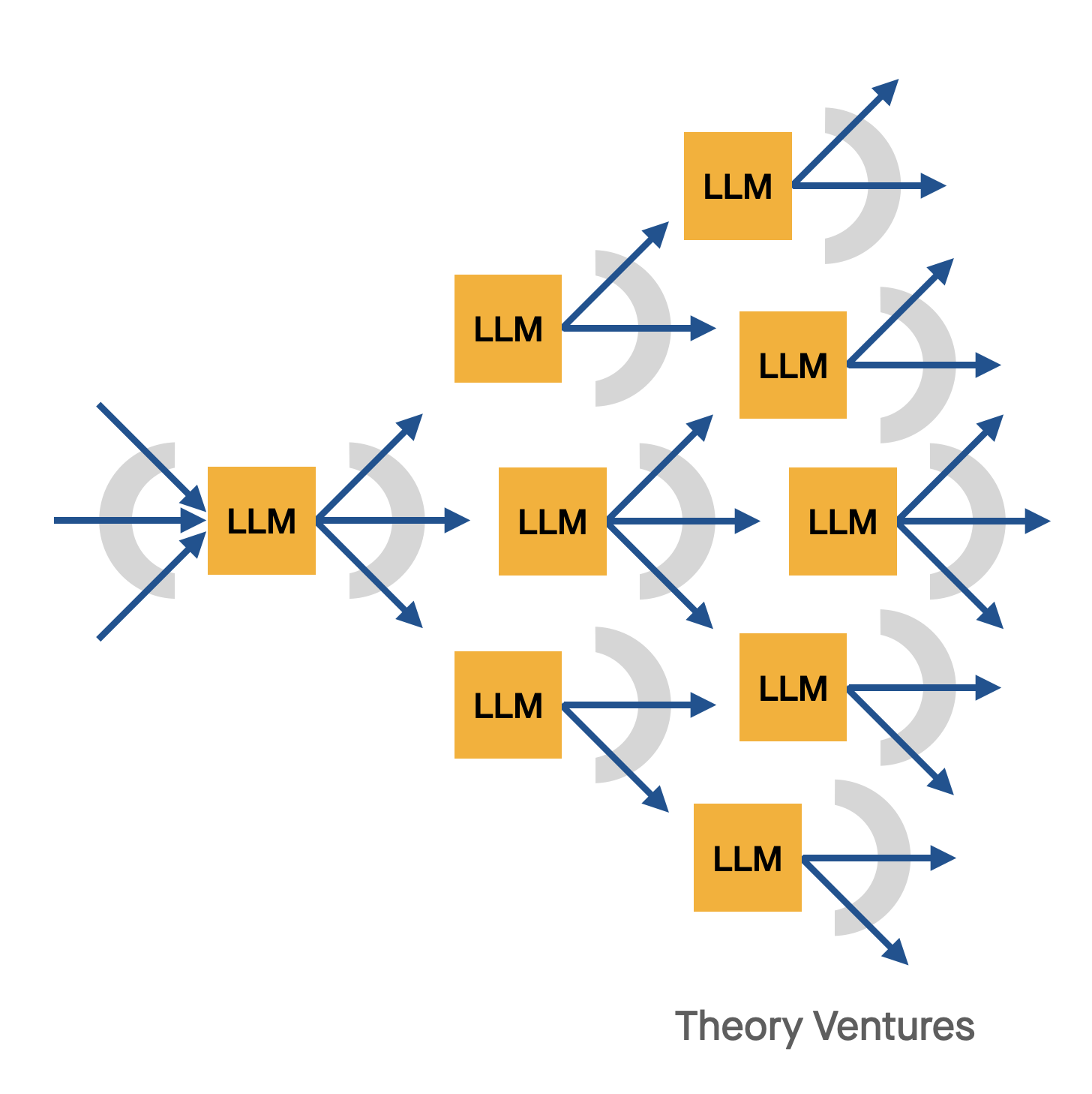

This raises a fundamental architecture question: what’s a better way to build agent systems that can efficiently orchestrate multiple tools without breaking the bank?

The answer lies in small action models. NVIDIA released a compelling paper arguing that “Small language models (SLMs) are sufficiently powerful, inherently more suitable, and necessarily more economical for many invocations in agentic systems.” This isn’t just theoretical—it’s playing out in practice across enterprise deployments.

I’ve been testing different local models to validate this approach. Started with a Q1 330 billion parameter model, which works but runs painfully slow. Then I shifted to Salesforce’s xLAM model, a large action model specifically designed for tool orchestration. The performance on my Mac M2 Pro is ideal—fast inference with excellent tool selection accuracy.

This experiment revealed a fundamental trade-off in agent architecture: how much intelligence should live in the model versus in the tools themselves. With larger models like QN, tools can be simpler because the model has better error tolerance and can work around poorly designed interfaces. The model compensates for tool limitations through brute-force reasoning.

With smaller models, you need better error correction in tool selection. The model has less capacity to recover from mistakes, so the tools must be more robust and the selection logic more precise. This might seem like a limitation, but it’s actually a feature.

This constraint eliminates the compounding error rate of LLM chained tools. When large models make sequential tool calls, errors accumulate exponentially. Each mistaken tool selection reduces the probability of overall task success. The mathematics of this compounding error show why even 95% accuracy per step leads to system failure over long chains.

Small action models force better system design. They require deterministic tool interfaces, clear error handling, and robust fallback mechanisms. The result is more reliable agent behavior, not less.

Consider the architectural implications across different deployment models:

[Space for 2x2 matrix comparing cloud vs on-prem and large vs small models]

Enterprise software companies are already seeing this pattern. Small action models running locally can handle 80% of tool orchestration tasks while large models stay reserved for complex reasoning that truly requires their capabilities. This hybrid approach delivers both cost efficiency and performance optimization.

The economic advantages become compelling at scale. Local inference costs essentially zero per call, while API costs accumulate quickly across thousands of agent interactions. For companies building agent-powered products, this cost difference determines product viability.

As 2025 becomes the year of agents, the winning architecture combines small action models for tool selection with deterministic, well-built tools for execution. This isn’t just a technical optimization—it’s the foundation for scalable enterprise AI systems that work reliably in production environments.

The 1-minute read that turns tech data into strategic advantage.

Read by 150k+ founders & operators.